The ultra-optimized infrastructure for AI workloads

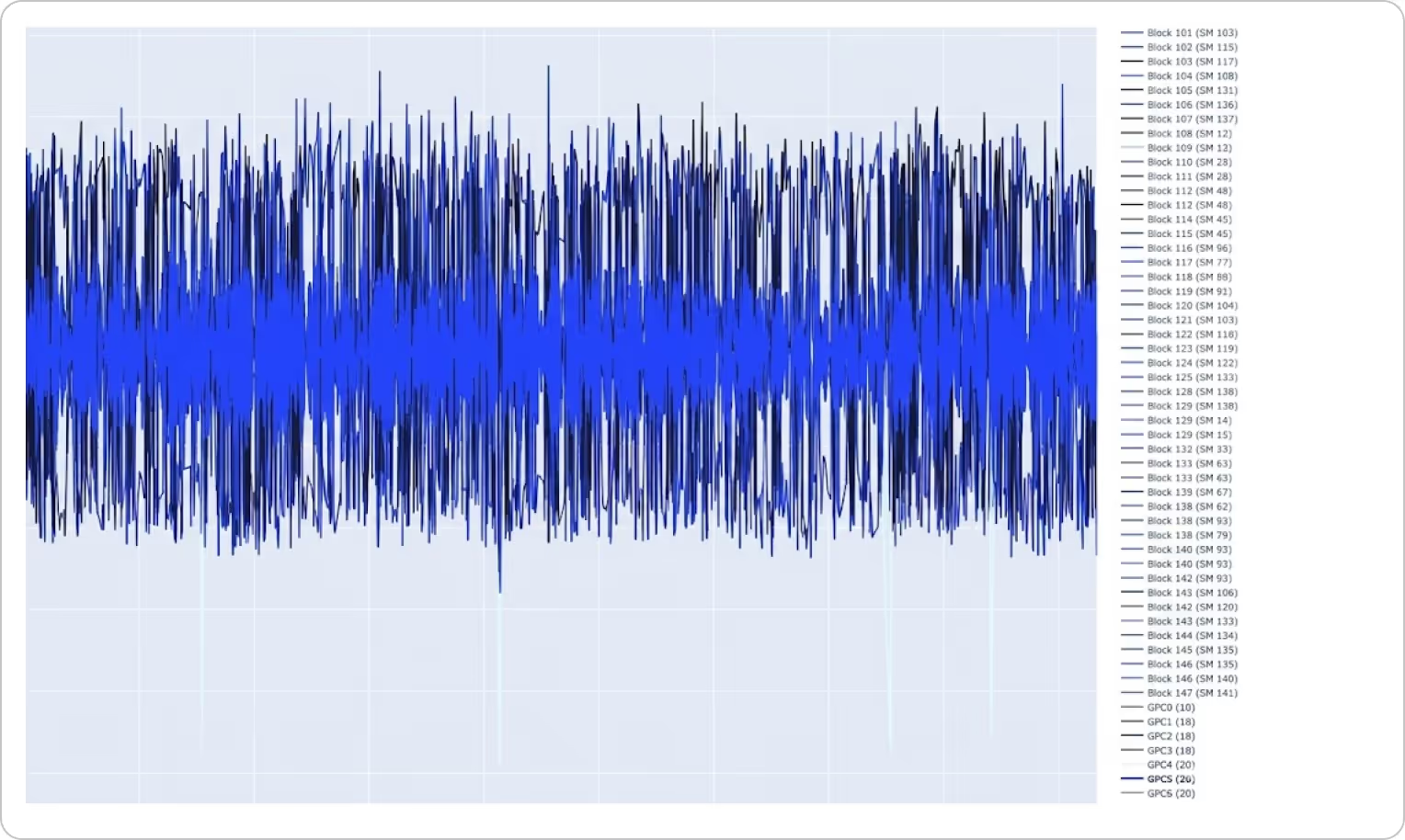

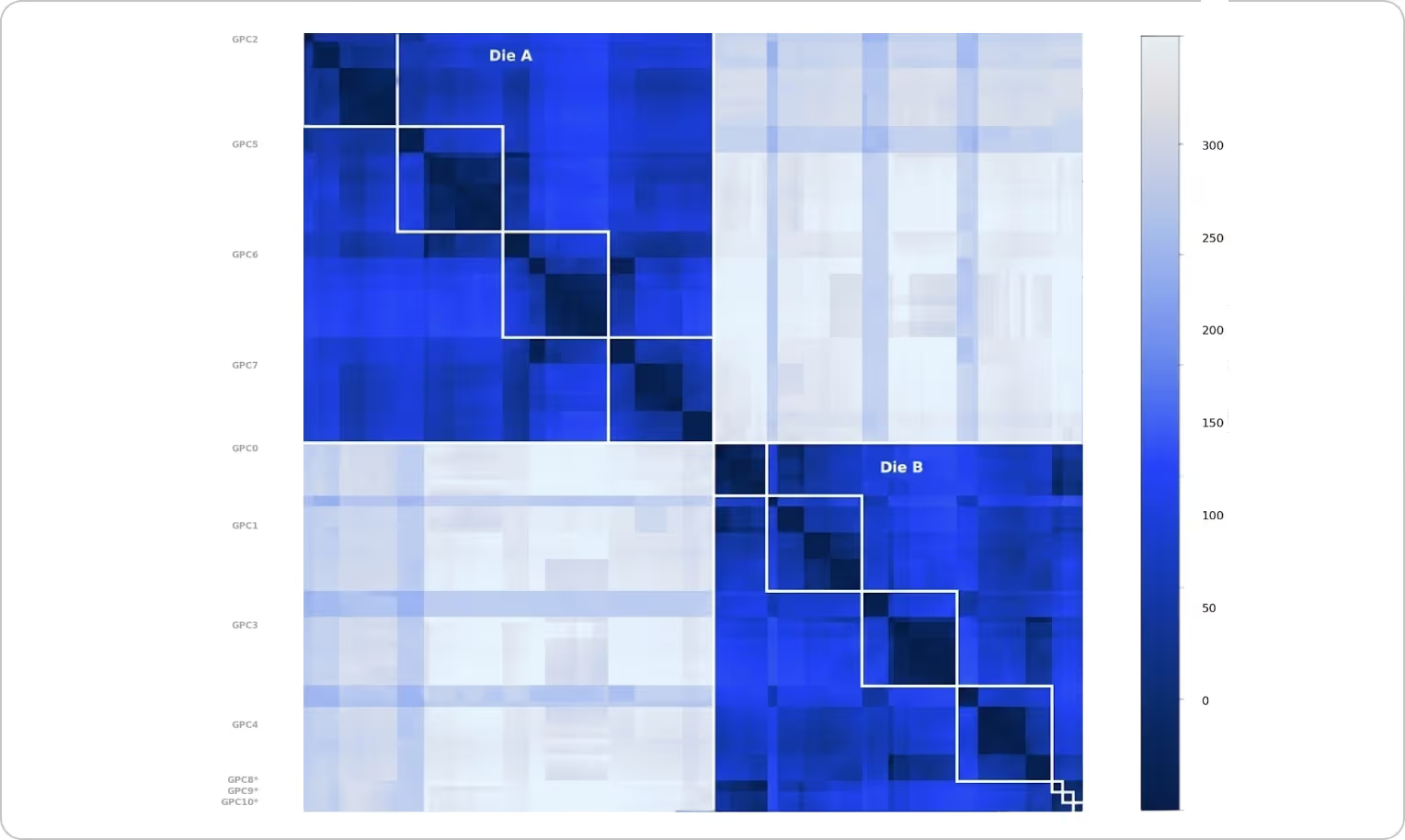

The Decart Optimization Stack (DOS) is a vertically integrated inference and training platform for LLM, agentic, video, and world model workloads. It spans hardware-aware model design, kernel tooling, proprietary compilers, and inference optimization, and it integrates into your existing infrastructure.

We work with hyperscalers, chip manufacturers, and AI labs to extract maximum performance from their most important workloads across GPUs, TPUs, Trainium, AMD, and other accelerators.

What you get

The standard AI stack was built for one-prompt, one-output workflows. DOS is a different architecture built for the latency, throughput, and cost requirements of continuous, real-time AI workloads.

Faster time to production

Compress months of low-level tuning into weeks using a production-validated optimization playbook.

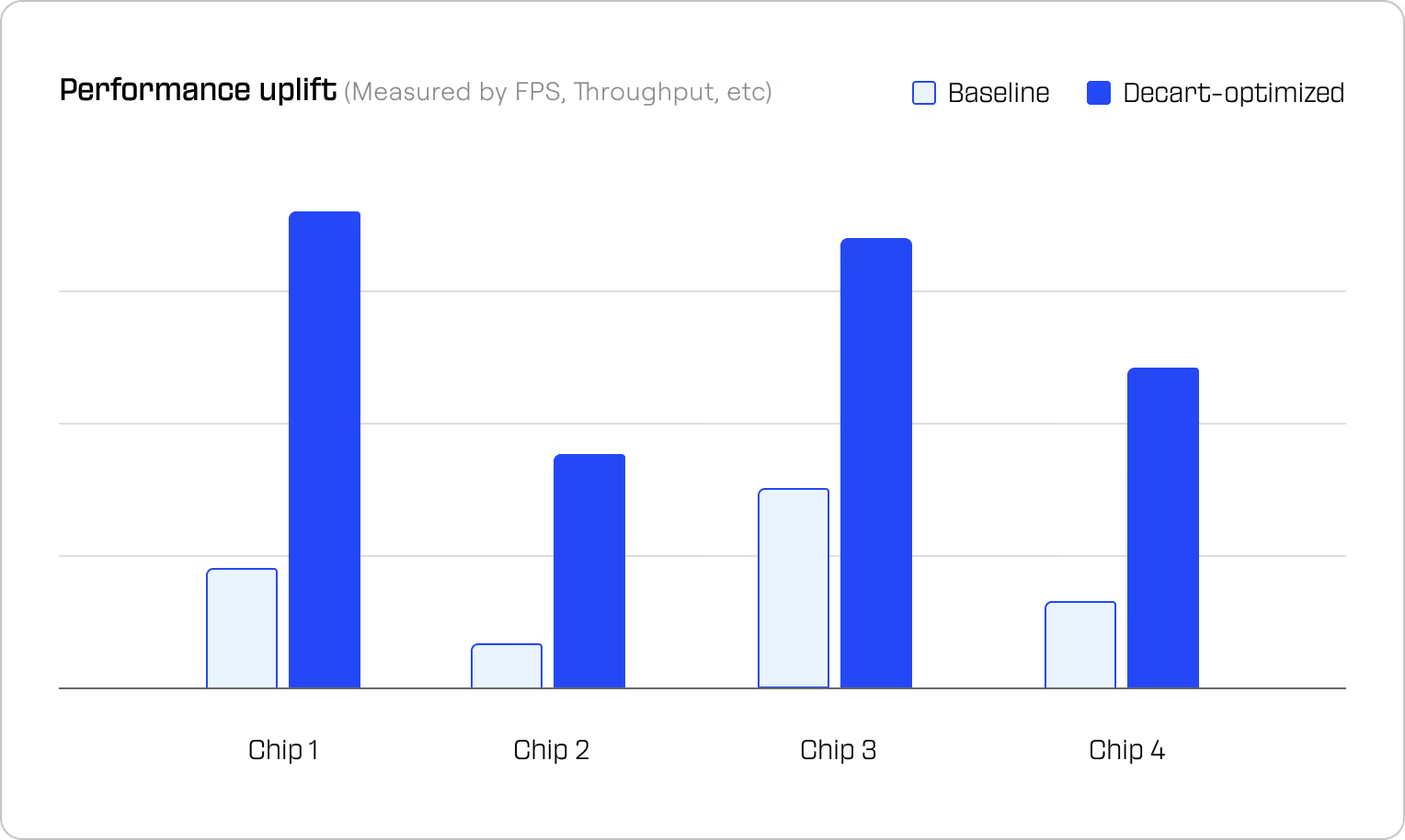

Full hardware utilization

Extract peak performance from every chip, across inference, training, and hardware generations.

Significant cost reduction

Order-of-magnitude efficiency gains that translate directly into lower TCO — and compound as models improve.

We're building the infrastructure AI runs on. Come build it with us.

Whether you're looking to run a scoped, milestone-based pilot or explore a long-term strategic partnership, we'd love to understand your workload and show you what's possible.